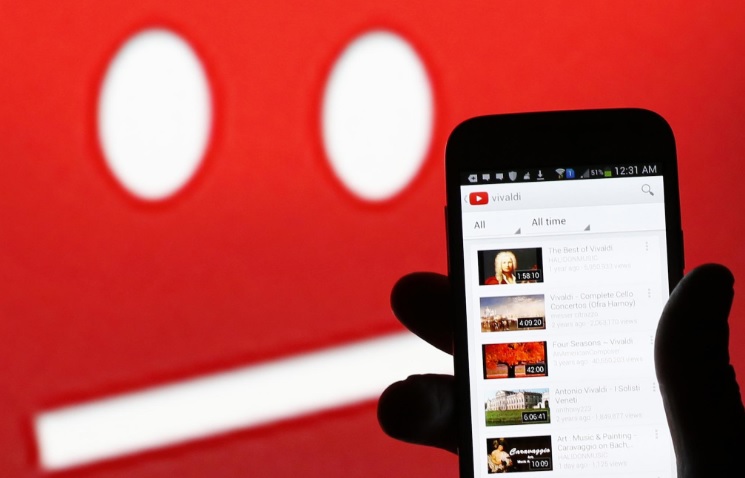

YouTube is actively filtering misinformation, rumors, and videos featuring dangerous stunts and challenges. YouTube recently updated its policies and pledged to take stringent action against YouTubers who post dangerous pranks and deadly challenges such as the Bird Box challenge. Now the biggest video streaming platform is revamping its video recommendation algorithm to reduce the reach of misinformation and misleading videos.

Some videos are not outright violent and do not directly violate YouTube's Community Guidelines, but they mislead viewers and so sit on the borderline of violating them. Examples include videos with thumbnails and titles such as “You won’t believe what happens next”, videos claiming to cure skin diseases or other illnesses, and videos spreading false claims, such as that a particular country's national anthem has been declared the best in the world.

YouTube will improve its algorithms to limit how often such videos appear in recommendations. If recommendations filter these videos out, they will circulate less and will not spread like wildfire, yet they will remain available on YouTube as long as they do not violate its Community Guidelines. Here’s what YouTube said:

> We’ll continue that work this year, including taking a closer look at how we can reduce the spread of content that comes close to—but doesn’t quite cross the line of—violating our Community Guidelines. To that end, we’ll begin reducing recommendations of borderline content and content that could misinform users in harmful ways—such as videos promoting a phony miracle cure for a serious illness, claiming the earth is flat, or making blatantly false claims about historical events like 9/11.
>
> While this shift will apply to less than one percent of the content on YouTube, we believe that limiting the recommendation of these types of videos will mean a better experience for the YouTube community. To be clear, this will only affect recommendations of what videos to watch, not whether a video is available on YouTube. As always, people can still access all videos that comply with our Community Guidelines and, when relevant, these videos may appear in recommendations for channel subscribers and in search results. We think this change strikes a balance between maintaining a platform for free speech and living up to our responsibility to users.
>
> This change relies on a combination of machine learning and real people. We work with human evaluators and experts from all over the United States to help train the machine learning systems that generate recommendations. These evaluators are trained using public guidelines and provide critical input on the quality of a video.
